
Fresh off the success of ChatGPT, developer OpenAI has unveiled GPT-4, the successor to GPT-3.5 and “the latest milestone in OpenAI’s effort in scaling up deep learning”. ChatGPT has been the talk of the technology town since its release, significantly accelerating the development and adoption of artificial intelligence (AI), but it has its shortcomings, many of which OpenAI aims to address with GPT-4.

According to OpenAI, GPT-4 is a large multimodal model that accepts image and text inputs and generates text outputs. Like the model behind ChatGPT, it is a type of generative AI that predicts text to produce new content in response to prompts. Even though it’s “less capable than humans in many real-world scenarios”, OpenAI claims that the model exhibits human-level performance on various professional and academic benchmarks.

The company says that while the difference between GPT-4 and its predecessor can be subtle in casual conversation, it becomes apparent “when the complexity of the task reaches a sufficient threshold”. GPT-4 is said to be more reliable, more creative, and able to handle much more nuanced instructions than GPT-3.5.

The new model can process up to 25,000 words, roughly eight times as many as ChatGPT. OpenAI tested the model on various benchmarks, including simulated exams designed for humans, and found that GPT-4 outperformed existing large language models (LLMs). OpenAI also reports that, in most of the languages it tested, GPT-4 outperformed the English-language performance of GPT-3.5 and other LLMs, including in low-resource languages such as Latvian, Welsh, and Swahili.

One new capability is accepting both text and images as input (GPT-3.5 only accepted text). The model can generate text outputs from inputs that combine the two.

In one of OpenAI’s examples, the chatbot is given a picture of a meme and asked to explain it. The model not only understands the context but also breaks the meme down in detail and explains what’s going on.

OpenAI says it spent six months “iteratively aligning” GPT-4 using lessons from an internal adversarial testing program as well as ChatGPT, resulting in its “best-ever results” on factuality, steerability and refusing to go outside of guardrails.

The company worked with Microsoft to build a “supercomputer” from scratch in the Azure cloud, which was used to train GPT-4. Another notable improvement in GPT-4 is the aforementioned steerability. GPT-4 will allow users to define their AI’s style and task by describing those directions in the “system” message. System messages are instructions that set the tone and boundaries for the AI’s subsequent responses. They are slated to come to ChatGPT sometime in the future as well.

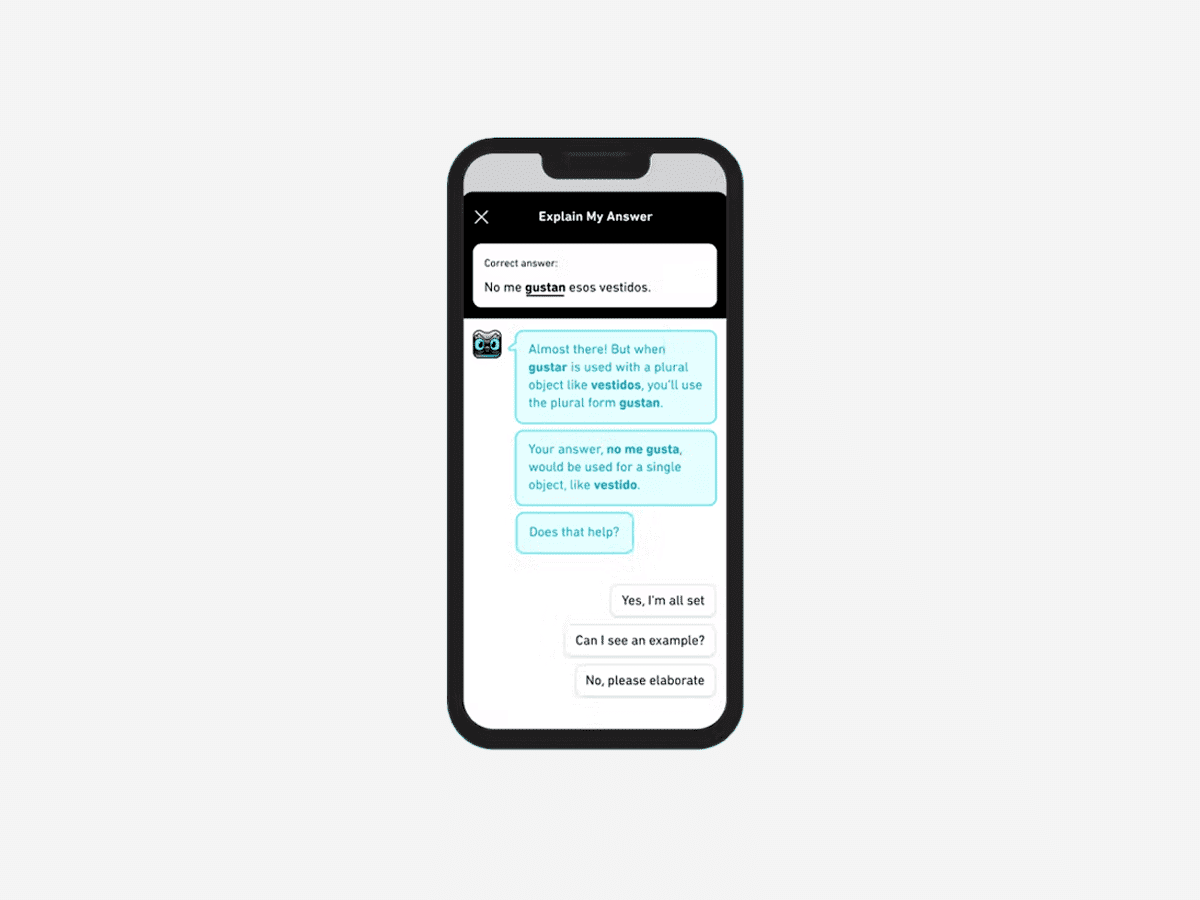

In its blog post, OpenAI gives an example of a system message, which might read: “You are a tutor that always responds in the Socratic style. You never give the student the answer, but always try to ask just the right question to help them learn to think for themselves. You should always tune your question to the interest and knowledge of the student, breaking down the problem into simpler parts until it’s at just the right level for them.”
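For developers, a system message is typically sent as the first entry in a list of chat messages, each tagged with a role. The snippet below is a minimal sketch of how such a request body might be assembled. It only builds and prints the data; it does not call any API. The helper name `build_chat_request` and the sample student question are hypothetical, and the system message is an abridged version of OpenAI’s Socratic tutor example.

```python
import json

# Abridged from the Socratic tutor example in OpenAI's blog post.
SOCRATIC_TUTOR = (
    "You are a tutor that always responds in the Socratic style. "
    "You never give the student the answer, but always try to ask just the "
    "right question to help them learn to think for themselves."
)


def build_chat_request(system_message, user_message, model="gpt-4"):
    """Hypothetical helper: return a chat request body as a plain dict.

    The system message goes first so it sets the tone and boundaries
    for everything that follows; the user's prompt comes after it.
    """
    return {
        "model": model,
        "messages": [
            {"role": "system", "content": system_message},
            {"role": "user", "content": user_message},
        ],
    }


if __name__ == "__main__":
    # Sample question invented for illustration.
    request = build_chat_request(SOCRATIC_TUTOR, "How do I solve 3x + 2 = 14?")
    print(json.dumps(request, indent=2))
```

Swapping in a different system message changes the assistant’s persona without touching the user’s prompt, which is the essence of the steerability OpenAI describes.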

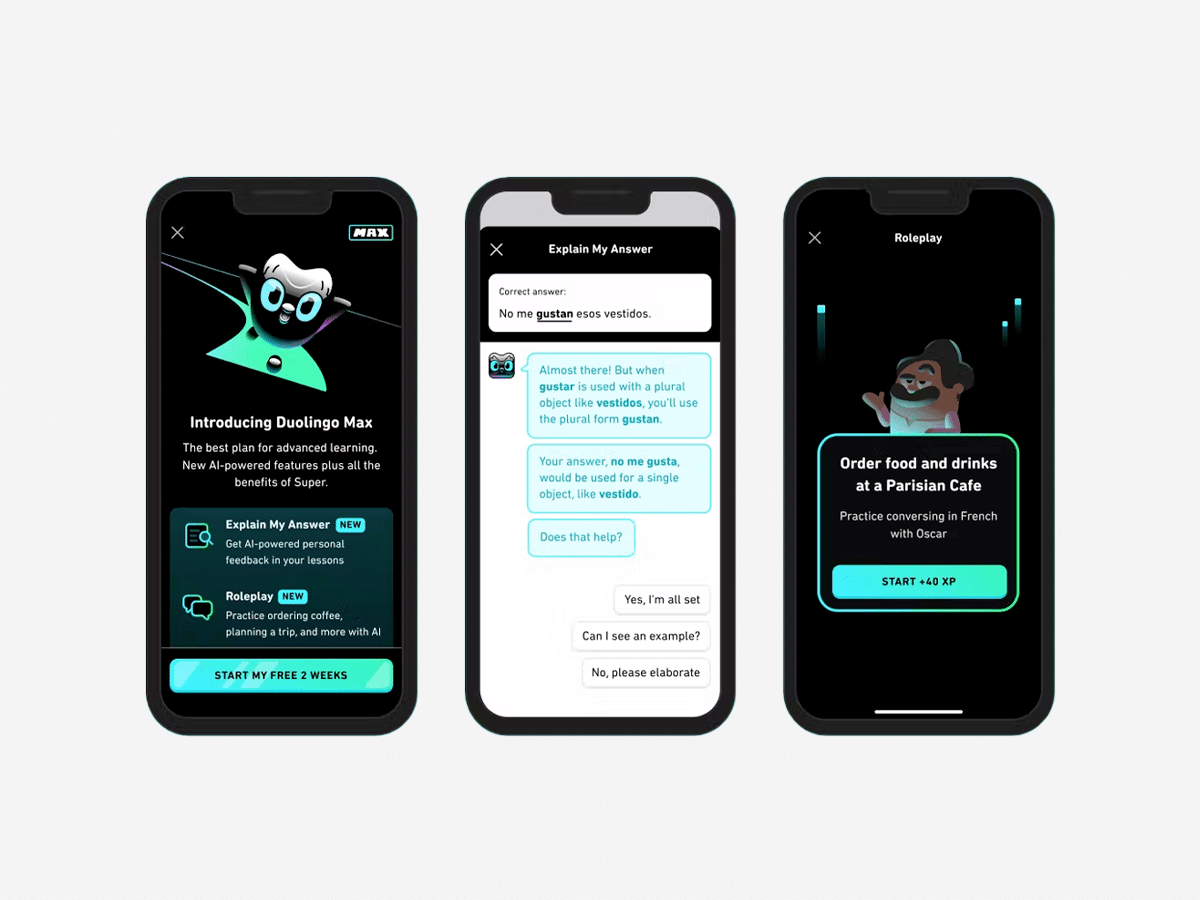

GPT-4 is now available to OpenAI’s paying users via ChatGPT Plus (with a usage cap), and developers can join a waitlist for API access. Microsoft has confirmed that the new Bing search has already been running on GPT-4, while Stripe is using GPT-4 to scan business websites and deliver summaries to customer support staff. Other early adopters include Duolingo, Morgan Stanley and Khan Academy.

While GPT-4 marks a massive step up in both performance and capabilities, it has its fair share of issues. It still “hallucinates” facts and makes reasoning errors, sometimes with great confidence. In one of the examples, GPT-4 described Elvis Presley as the “son of an actor”, which is plainly wrong.

“GPT-4 generally lacks knowledge of events that have occurred after the vast majority of its data cuts off (September 2021), and does not learn from its experience,” OpenAI noted. “It can sometimes make simple reasoning errors which do not seem to comport with competence across so many domains, or be overly gullible in accepting obvious false statements from a user. And sometimes it can fail at hard problems the same way humans do, such as introducing security vulnerabilities into code it produces.”
